One of the keys to controlling vibration is being able to accurately measure it. A few years ago, this would have meant buying expensive instrumentation and spending days, if not weeks, learning how to set it up. But today, thanks to PCs and such developments as multitasking operating systems, the PCI bus, and Plug and Play technology, things are a lot easier.

All you need to measure vibration is a PC, a sensor, a plug-in board, and a basic understanding of dynamic signals and how to condition and process them. Even if you've never seen a vibration spectrum before, you'll probably find that within a few minutes of getting a display on the screen you'll be "seeing" things in your design you never knew were there -- things like resonant peaks, environmental effects, and subtle interactions between components. With this new-found knowledge, you'll have those annoying rough spots worked out in no time.

Working smarter

This may sound familiar. You're working on a project. You think you're a "few minor adjustments" away from the speed and precision that initially seemed an impossible goal. You switch bearings to get a little more rpm, but it throws off the machine's ability to hold position. You counter by reprogramming a digital filter in the controller, but it only makes things worse. Before you know it, you're back to square one, re-verifying everything you assumed to be true.

Now try it another way. Instead of just swapping components and crossing your fingers, run a dynamic signal analysis on your prototype. After you've located the resonances, find out what's causing them. Any modifications from here should reduce the unwanted vibrations, or at least mitigate their effect. Even if you have to take a few steps back to fix the problem, what you'll have learned in the process will undoubtedly help you make better decisions in the future.

The ability to measure vibration is a must these days. Controlling vibration is key to higher machine productivity, whether you're talking about a wafer stepper, an MRI system, or a milling machine for aerospace-quality parts. And it's not just a matter of speed and precision. Reducing vibration extends component life, minimizes thermal effects, and saves energy. It even improves ergonomics. Fortunately, with today's powerful PCs and the availability of low-cost signal acquisition hardware and software, practically every motion-system engineer can achieve such improvements.

Pick it up

Vibration measurements start with a sensor. Usually it's some sort of accelerometer used in tandem with a tachometer, but it could also be a microphone or noncontact displacement "probe." What you use depends on what you're testing and what you're trying to learn.

Accelerometers: Accelerometers convert acceleration directly into an electrical signal. This signal can be used to measure vibration; it can also be integrated once to obtain velocity and twice to obtain displacement.

Accelerometer output is frequently expressed in g's, or gravitational units. Vibration specifications, on the other hand, are almost always expressed in metric units, m/s². To convert, use the relation 1 g = 9.81 m/s², or 10 m/s² if you can live with an error of about 2%.
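The conversion and integration steps can be sketched in a few lines of Python. This is a minimal illustration that assumes uniformly sampled data. The function names are hypothetical, and plain trapezoidal integration is only one simple choice.

```python
G = 9.81  # m/s^2 per g (the conversion factor quoted above)

def g_to_ms2(samples_g):
    """Convert acceleration samples from g to m/s^2."""
    return [a * G for a in samples_g]

def integrate_trapezoid(samples, dt, initial=0.0):
    """Cumulative trapezoidal integral of uniformly sampled data (step dt seconds)."""
    out = [initial]
    for prev, cur in zip(samples, samples[1:]):
        out.append(out[-1] + 0.5 * (prev + cur) * dt)
    return out

# Hypothetical record: a constant 1 g for 1 s, sampled at 1 kHz.
dt = 0.001
accel = g_to_ms2([1.0] * 1001)                     # m/s^2
velocity = integrate_trapezoid(accel, dt)          # m/s
displacement = integrate_trapezoid(velocity, dt)   # m
```

With real accelerometer data, any dc offset or low-frequency noise integrates into a steadily growing drift, so the signal is usually high-pass filtered before each integration step.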

Accelerometers are available in several configurations. Some measure along one axis. Others, triaxial types, sense acceleration simultaneously along three orthogonal axes. Usually triaxial types are just a combination of single-axis units.

Like many instrumentation sensors, accelerometers must be calibrated. This is typically done with a handheld shaker that generates a constant, known level of acceleration at a particular frequency.

Tachometers: Tachometers are used to measure angular velocity and position, and can be quite helpful when testing rotating equipment.

"Tachs" generally require the addition of a toothed wheel, or gear, that mounts on the test shaft. As the shaft turns, the tachometer counts gear teeth using optical or magnetic means. An optical pick-up looks for transitions between reflective and nonreflective regions, while a magnetic pick-up responds to inductive variations associated with proximity. In some instances, rather than a gear, a small stripe of reflective tape may be attached to the shaft, generating one pulse per revolution. The advantage here is that you haven't added any real mass to the test shaft.

Whatever the case, the information is encoded in a pulse train. Instantaneous angular speed, for example, is directly related to the time interval between two consecutive pulses. If it has a reference point, say a missing tooth on the wheel, a tachometer can also measure absolute angular position.
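A minimal sketch of decoding a tachometer pulse train, assuming you already have the pulse timestamps (for example, captured by a counter/timer input). The names `speeds_from_pulses`, `rpm`, and `find_missing_tooth` are hypothetical, and the 1.5x gap threshold used to spot the missing tooth is an assumed value.

```python
import math

def speeds_from_pulses(times, pulses_per_rev):
    """Instantaneous angular speed (rad/s) between consecutive pulses.

    Assumes evenly spaced teeth (no missing tooth in the span analyzed).
    """
    pitch = 2 * math.pi / pulses_per_rev  # angle between adjacent teeth, rad
    return [pitch / (t1 - t0) for t0, t1 in zip(times, times[1:])]

def rpm(omega):
    """Convert rad/s to revolutions per minute."""
    return omega * 60 / (2 * math.pi)

def find_missing_tooth(times, ratio=1.5):
    """Indices of pulses that follow an unusually long gap (the reference mark).

    A gap more than `ratio` times the previous one is taken as the missing
    tooth; the pulse right after it marks the wheel's zero angle.
    """
    gaps = [t1 - t0 for t0, t1 in zip(times, times[1:])]
    return [i + 1 for i in range(1, len(gaps)) if gaps[i] > ratio * gaps[i - 1]]
```

For a hypothetical 60-tooth wheel turning at 600 rpm, consecutive pulses arrive 1/600 s apart and `speeds_from_pulses` returns about 62.8 rad/s for each interval.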

Displacement probes: Displacement "probes" employ noncontact methods to measure relative motion between a shaft and its mount. They are particularly useful when little acceleration reaches the housing, as is the case in rotating machines with flexible bearings.

In the case of magnetic probes, high-frequency oscillators generate eddy currents in the shaft. The energy of the current is a function of the distance between the shaft and probe. As the distance changes, more or less energy is drawn from the oscillator, in effect, modulating the drive voltage. Getting an output signal proportional to displacement requires a simple demodulation and the removal of some dc offset.
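The demodulation step can be illustrated with a simulated probe signal. This sketch assumes the oscillator amplitude varies linearly with the gap, and it uses full-wave rectification plus a one-carrier-period moving average as the demodulator. Real probe electronics do this in analog hardware; the function name, carrier rate, and signal values here are hypothetical.

```python
import math

def demodulate(v, samples_per_carrier):
    """Recover the slow gap signal from an amplitude-modulated probe oscillator.

    Full-wave rectify, average over one carrier period (a crude low-pass),
    then subtract the mean to remove the dc offset (the static gap).
    """
    n = samples_per_carrier
    rect = [abs(s) for s in v]
    envelope = [sum(rect[i:i + n]) / n for i in range(len(rect) - n + 1)]
    offset = sum(envelope) / len(envelope)
    return [e - offset for e in envelope]

# Hypothetical probe signal: 20 samples per carrier cycle, carrier amplitude
# assumed proportional to gap = 1.0 (static) + 0.2 * sin(...) (shaft motion).
CARRIER = 20
LENGTH = 4000 + CARRIER - 1
gap = [1.0 + 0.2 * math.sin(2 * math.pi * i / 2000) for i in range(LENGTH)]
probe = [g * math.sin(2 * math.pi * i / CARRIER) for i, g in enumerate(gap)]
displacement = demodulate(probe, CARRIER)  # proportional to gap minus its mean
```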

Other tools: Another vibration sensing technique relies on instrumented hammers. Striking the hammer against the test device generates wide-band excitation that can be used for modal analysis. This is typically used in conjunction with accelerometers mounted in the normal way.

Other sources of controlled excitation are shakers and loudspeakers. These can be used to generate specific vibrations such as sine waves, white noise, chirp signals, and so on. They can also reproduce, in a controlled environment, conditions previously recorded during actual use of the part under test.

Getting in shape

Raw sensor signals must be "conditioned" before they can be analyzed. This pre-processing stage takes care of such issues as aliasing and spectral leakage.

According to Shannon's sampling theorem, the highest (Nyquist) frequency that can be analyzed in a sampled signal is fn = fs/2, where fs is the sampling frequency. Any analog frequency greater than fn will, after sampling, appear as an "alias" frequency between 0 and fn.
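Where an out-of-band tone will land can be worked out by folding its frequency back into the range 0 to fs/2. A small sketch (the function name is hypothetical):

```python
def alias_frequency(f, fs):
    """Apparent frequency (between 0 and fs/2) of a tone at f after sampling at fs."""
    folded = f % fs  # sampling can't tell f apart from f - k*fs
    return folded if folded <= fs / 2 else fs - folded

print(alias_frequency(900, 1000), alias_frequency(1100, 1000))  # 100 100
```

With fs = 1,000 Hz, tones at 900 Hz and 1,100 Hz both appear at 100 Hz, indistinguishable from a genuine 100-Hz vibration.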

In the digital (sampled) domain, there's no way to distinguish alias frequencies from real ones, so they need to be removed from the analog signal before it's sampled by the a/d converter. For this, you would use an anti-alias filter. An ordinary low-pass (analog) filter will work fine, provided it has a flat in-band frequency response and fast roll-off.
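To see why roll-off matters, the sketch below computes the response of an ideal analog Butterworth low-pass filter, one common design with a maximally flat passband. The article doesn't prescribe a filter type; the Butterworth choice, the sample rate, and the cut-off placement are assumptions for illustration.

```python
import math

def butterworth_attenuation_db(f, fc, order):
    """Gain (dB, negative = attenuation) of an ideal analog Butterworth low-pass.

    |H(f)| = 1 / sqrt(1 + (f / fc) ** (2 * order)): flat in the passband,
    rolling off at 20 * order dB per decade above the cut-off fc.
    """
    return 20 * math.log10(1 / math.sqrt(1 + (f / fc) ** (2 * order)))

fs = 10_000.0  # sample rate, Hz (assumed)
fc = 0.4 * fs  # cut-off at 40% of the sample rate (assumed)
for n in (2, 4, 8):
    print(n, round(butterworth_attenuation_db(fs / 2, fc, n), 1))
```

Here the Nyquist frequency is only 1.25 times the cut-off, so even the 8th-order filter attenuates by only about 16 dB at fs/2. That is why an anti-alias filter needs a steep roll-off, a cut-off well below fs/2, or both.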

Choosing the cut-off frequency requires that you take into account not only the sampling rate, but the type of a/d converter as well. Systems that use sigma-delta converters, for example, typically place the cut-off at 45 to 50% of the sample rate. Because sigma-delta converters oversample and filter digitally on chip, this filter is very sharp and automatically tracks the sample rate.

Another phenomenon associated with sampling is spectral leakage. In practice, you can record only a finite number of samples before running out of storage space. When these data are used for frequency analysis, the processing algorithm assumes that the time record repeats indefinitely. In other words, it operates as if the original signal were an endless string of repetitions of the time record.

If by some chance you happen to capture an integral number of cycles in your time record, the reconstructed signal will be smooth and continuous, and spectral analysis will yield good results. More often, however, you'll capture a nonintegral number of cycles. In this case, the reconstructed signal will exhibit discontinuities at the boundaries between successive copies of the time record. These jumps corrupt the results of any further processing, causing energy to smear, or leak, from the actual frequency into all other frequencies. The severity depends on the amplitude of the discontinuity.
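The effect of capturing a nonintegral number of cycles is easy to demonstrate. This sketch uses a naive DFT (in practice you'd use an FFT such as `numpy.fft.rfft`) on two 64-sample sine records, one with exactly 8 cycles and one with 8.5, and measures how much spectral power falls outside the bins around the peak. The record length, tone frequencies, and the `leaked_fraction` metric are illustrative assumptions.

```python
import cmath, math

def dft_magnitudes(x):
    """Naive DFT magnitudes for bins 0..N/2 (an FFT would be used in practice)."""
    n = len(x)
    return [abs(sum(x[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n)))
            for m in range(n // 2 + 1)]

def leaked_fraction(mags, halfwidth=1):
    """Share of spectral power lying outside the bins nearest the peak."""
    power = [v * v for v in mags]
    peak = max(range(len(power)), key=power.__getitem__)
    near = sum(power[max(0, peak - halfwidth):peak + halfwidth + 1])
    return 1 - near / sum(power)

N = 64
integral = [math.sin(2 * math.pi * 8.0 * k / N) for k in range(N)]     # exactly 8 cycles
nonintegral = [math.sin(2 * math.pi * 8.5 * k / N) for k in range(N)]  # 8.5 cycles

leak_integral = leaked_fraction(dft_magnitudes(integral))        # essentially zero
leak_nonintegral = leaked_fraction(dft_magnitudes(nonintegral))  # a noticeable fraction
```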

One way to minimize the effect of spectral leakage is to smooth the discontinuities prior to processing. This is commonly done using a technique called windowing, in which the time-domain signal is multiplied by a waveform whose amplitude tapers gradually and smoothly toward zero at both edges.
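A minimal windowing sketch, assuming a Hann window (one common choice among many; the article doesn't specify one). It compares how far below the peak a bin 11.5 bins away from the tone sits, with and without the window, for an 8.5-cycle record.

```python
import cmath, math

def dft_mag(x, m):
    """Magnitude of DFT bin m, computed as a direct dot product."""
    n = len(x)
    return abs(sum(x[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n)))

def hann(n):
    """Periodic Hann window: starts at zero and tapers smoothly toward zero at the end."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * k / n) for k in range(n)]

def level_below_peak_db(x, far_bin, peak_bin):
    """Level of far_bin relative to peak_bin, in dB."""
    return 20 * math.log10(dft_mag(x, far_bin) / dft_mag(x, peak_bin))

N = 64
x = [math.sin(2 * math.pi * 8.5 * k / N) for k in range(N)]  # 8.5 cycles: leaky
x_windowed = [s * w for s, w in zip(x, hann(N))]

rect_db = level_below_peak_db(x, far_bin=20, peak_bin=8)
hann_db = level_below_peak_db(x_windowed, far_bin=20, peak_bin=8)
```

The Hann window trades a slightly wider main peak for much lower leakage far from the signal frequency.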

Extracting information

If you plan to measure vibration, you need to be familiar with standard signal-processing techniques, including frequency analysis (single and dual channel), averaging, and order analysis.

Because of the nature of vibration, a lot of the information it contains is a function of frequency rather than time. Getting to and analyzing this information, then, usually requires converting time-based data to the frequency domain.

Modern frequency analysis is based on Fourier's theorem, which states that any stationary time-domain wave can be approximated by a weighted sum of sines and cosines. Time-based signals, therefore, can be represented in the frequency domain as a series of amplitude and phase values, each specified at a particular frequency.

The most widely used method for converting sampled data to the frequency domain is the fast Fourier transform, or "FFT," an efficient algorithm for computing the discrete Fourier transform (DFT). Each frequency component is the result of a dot product between the time signal and the complex exponential (cosine and sine) at that frequency. Thus, every frequency component has a magnitude and phase.

The phase is relative to the start of the time record. As a result, single-channel phase measurements are stable only if the input signal is triggered. Dual-channel measurements, on the other hand, compute phase differences between channels, which eliminates the need for triggering.

Most people are more familiar with magnitude plots than phase plots. Magnitude, at any frequency, is the square root of the sum of the squares of the real and imaginary parts of an FFT.
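The dot-product definition and the magnitude and phase calculations can be checked directly on a single bin. This is a plain DFT sketch for illustration; an FFT computes the same values for all bins far more efficiently. The test signal is an assumption: a cosine of amplitude 2 with exactly 3 cycles in the record and a 45° phase offset, so the expected results are known in advance.

```python
import cmath, math

def dft_bin(x, m):
    """Frequency component m: dot product of the record with exp(-j*2*pi*m*k/N)."""
    n = len(x)
    return sum(xk * cmath.exp(-2j * math.pi * m * k / n) for k, xk in enumerate(x))

N = 32
# Test record: amplitude 2, exactly 3 cycles, 45-degree phase at the first sample.
x = [2 * math.cos(2 * math.pi * 3 * k / N + math.pi / 4) for k in range(N)]

X = dft_bin(x, 3)
magnitude = math.sqrt(X.real ** 2 + X.imag ** 2)      # sqrt(re^2 + im^2), same as abs(X)
phase_deg = math.degrees(math.atan2(X.imag, X.real))  # relative to the record's first sample
amplitude = 2 * magnitude / N                         # peak amplitude, valid for 0 < m < N/2
```

Because the phase is measured from the first sample, shifting where the record starts changes `phase_deg`, which is why untriggered single-channel phase readings wander from record to record.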

The use of FFTs implies two important relationships. The first relates the highest frequency that can be analyzed to the sampling frequency: fmax = fs/2. The second links frequency resolution to the total acquisition time T: Δf = 1/T = fs/N, where N is the number of samples in the time record. The longer the acquisition time, the finer the resolution.
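The two relationships reduce to simple arithmetic. A sketch with illustrative numbers (the function name is hypothetical):

```python
def fft_limits(fs, n):
    """Return (highest analyzable frequency, frequency resolution, record length).

    fs: sampling frequency in Hz; n: number of samples in the time record.
    """
    f_max = fs / 2          # Nyquist frequency, Hz
    resolution = fs / n     # Hz, equivalently 1 / record_length
    record_length = n / fs  # acquisition time, s
    return f_max, resolution, record_length

f_max, df, t_rec = fft_limits(51200, 4096)  # 25,600 Hz, 12.5 Hz, 0.08 s
```

At the same sample rate, refining the resolution to 6.25 Hz means doubling the record to 8192 samples, or 0.16 s of acquisition.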

An effective way to improve accuracy is by averaging successive measurements. Averaging is usually performed on individual spectra, rather than the time record.

One type of averaging, RMS averaging, computes the (optionally weighted) mean of the squared magnitudes of successive spectra. Though it reduces fluctuations in the data, it doesn't lower the actual noise floor.

Vector averaging, in contrast, uses the complex FFT spectrum: the real part is averaged separately from the imaginary part. As a result, the noise floor can be reduced, because random noise is not phase-coherent from one time record to the next and tends to average toward zero.

It's important to note that vector averaging requires a trigger for single-channel measurements. That way, the real and imaginary parts of the signal add in phase from record to record instead of canceling randomly.
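The difference between the two averaging modes shows up clearly on simulated data. This sketch assumes triggered records, each holding the same sine (same starting phase) plus fresh Gaussian noise, and uses a naive DFT in place of an FFT. The record size, count, and noise level are illustrative assumptions.

```python
import cmath, math, random

def spectrum(x):
    """Naive DFT, bins 0..N/2 (an FFT would be used in practice)."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n))
            for m in range(n // 2 + 1)]

def rms_average(spectra):
    """Mean of squared magnitudes per bin, then square root; phase is discarded."""
    count = len(spectra)
    return [math.sqrt(sum(abs(s[m]) ** 2 for s in spectra) / count)
            for m in range(len(spectra[0]))]

def vector_average(spectra):
    """Average real and imaginary parts separately, then take the magnitude."""
    count = len(spectra)
    return [abs(sum(s[m] for s in spectra) / count) for m in range(len(spectra[0]))]

# Simulated triggered records: the sine starts at the same phase every time,
# while the added Gaussian noise is different in each record.
rng = random.Random(0)
N, RECORDS, SIGNAL_BIN = 64, 50, 8
records = [[math.sin(2 * math.pi * SIGNAL_BIN * k / N) + rng.gauss(0, 1) for k in range(N)]
           for _ in range(RECORDS)]
spectra = [spectrum(r) for r in records]
rms, vec = rms_average(spectra), vector_average(spectra)

noise_bins = [m for m in range(1, N // 2) if m != SIGNAL_BIN]
rms_floor = sum(rms[m] for m in noise_bins) / len(noise_bins)
vec_floor = sum(vec[m] for m in noise_bins) / len(noise_bins)
```

Under these assumptions the RMS-averaged noise floor stays near the single-record level, while the vector-averaged floor drops by roughly the square root of the number of records, and the signal bin keeps its full amplitude. Without the trigger, the sine's phase would vary between records and vector averaging would attenuate it as well.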

For some applications, a form of averaging called peak-hold may be more appropriate. This method retains the peak spectral magnitudes for each frequency. Peak values are stored in the original complex form so that all forms of the data can be displayed.
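Peak-hold can be sketched as a per-bin running maximum of magnitude that keeps the complex value itself, so magnitude, phase, or real and imaginary parts can still be displayed. The function name is hypothetical.

```python
def peak_hold(spectra):
    """Per-bin peak hold over a sequence of complex spectra.

    Keeps, for each bin, the complex value whose magnitude is largest so far,
    so magnitude, phase, or real/imaginary parts can all be displayed later.
    """
    held = list(spectra[0])
    for s in spectra[1:]:
        for m, value in enumerate(s):
            if abs(value) > abs(held[m]):
                held[m] = value
    return held

# Three hypothetical two-bin spectra
held = peak_hold([[1 + 0j, 0j], [0.5j, 3 + 4j], [2 + 0j, 1j]])  # [(2+0j), (3+4j)]
```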

As with all analytical techniques, you should proceed with caution before drawing any conclusions from signal-processing operations. Some software even includes tools for "testing the test." A prime example is the coherence function, which tells you what fraction of the power in the output signal is linearly related to the input. If there's no noise in your system (and the system is linear), all output power comes from the input and the coherence function is at its maximum value, 1.0.
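Coherence is commonly computed as the magnitude-squared coherence, |Gxy|² / (Gxx·Gyy), from auto- and cross-spectra averaged over several records. With a single record the ratio is trivially 1.0, so averaging is essential. The sketch below uses a naive DFT and simulated data; the names, record sizes, and signals are assumptions for illustration.

```python
import cmath, math, random

def spectrum(x):
    """Naive DFT, bins 0..N/2 (an FFT would be used in practice)."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n))
            for m in range(n // 2 + 1)]

def coherence(x_records, y_records):
    """Magnitude-squared coherence per bin: |Gxy|^2 / (Gxx * Gyy).

    Auto- and cross-spectra are summed over all record pairs; the common
    1/records scale factor cancels in the ratio.
    """
    bins = len(x_records[0]) // 2 + 1
    gxx, gyy, gxy = [0.0] * bins, [0.0] * bins, [0j] * bins
    for xr, yr in zip(x_records, y_records):
        X, Y = spectrum(xr), spectrum(yr)
        for m in range(bins):
            gxx[m] += abs(X[m]) ** 2
            gyy[m] += abs(Y[m]) ** 2
            gxy[m] += X[m].conjugate() * Y[m]
    return [abs(gxy[m]) ** 2 / (gxx[m] * gyy[m]) for m in range(bins)]

# Hypothetical data: input x is random; one output is a noise-free gain of 2,
# the other is unrelated noise.
rng = random.Random(1)
N, RECORDS = 32, 40
xs = [[rng.gauss(0, 1) for _ in range(N)] for _ in range(RECORDS)]
ys_clean = [[2 * v for v in r] for r in xs]
ys_unrelated = [[rng.gauss(0, 1) for _ in range(N)] for _ in range(RECORDS)]
coh_clean = coherence(xs, ys_clean)          # 1.0 (within rounding) in every bin
coh_unrelated = coherence(xs, ys_unrelated)  # small in every bin
```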

Order analysis

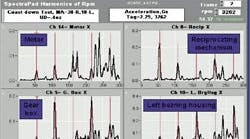

Order analysis, another processing technique, is particularly handy for rotating equipment. Here, noise and vibration are often a function of rotational speed through mechanisms such as gear mesh, timing chains, bearing defects, imbalances, misalignment, resonance, and cam irregularities. The resulting vibration signatures include a complex array of fundamental and sideband frequencies and are often difficult to analyze.

Order analysis helps in such cases by expressing vibration as a function of orders – harmonics of rotation that include integer as well as non-integer multiples of the fundamental frequency. The idea is to lock the sampling time to constant shaft positions, so that when rotational speed changes, the original signal is no longer sampled at constant time intervals, but at constant angle intervals.

There are two ways to obtain samples at constant shaft positions. One way, external sampling, relies on the measurement hardware to lock the sampling times to constant shaft positions. Another method, digital resampling, interpolates the original data acquired at constant sampling frequency, generating a resampled signal with data points at constant shaft positions.
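Digital resampling can be sketched with linear interpolation. This version assumes a once-per-revolution tach pulse and constant speed within each revolution, so angle maps linearly to time between pulses. Practical order-tracking software typically uses more pulses per revolution, better interpolation, and filtering to avoid order aliasing. The function name and test values are hypothetical.

```python
import bisect, math

def resample_by_angle(t, x, tach_times, samples_per_rev):
    """Resample x(t) at equal shaft-angle increments by linear interpolation.

    t, x: signal acquired at constant time intervals (t ascending)
    tach_times: times of a once-per-revolution tach pulse
    samples_per_rev: angular sampling rate of the output
    Assumes constant speed within each revolution, so angle is linear in time
    between consecutive tach pulses.
    """
    out = []
    for t0, t1 in zip(tach_times, tach_times[1:]):
        for j in range(samples_per_rev):
            tj = t0 + (t1 - t0) * j / samples_per_rev  # time of the j-th angle
            i = bisect.bisect_right(t, tj) - 1         # sample at or before tj
            i = min(max(i, 0), len(t) - 2)
            frac = (tj - t[i]) / (t[i + 1] - t[i])
            out.append(x[i] + frac * (x[i + 1] - x[i]))  # linear interpolation
    return out

# Hypothetical check: 10 rev/s shaft, 2nd-order sine sampled at 10 kHz for 1 s.
t = [k / 10000 for k in range(10001)]
x = [math.sin(2 * 2 * math.pi * 10 * tk) for tk in t]
tach = [r / 10 for r in range(10)]     # once-per-rev pulses, 9 full revolutions
y = resample_by_angle(t, x, tach, 16)  # 16 samples/rev -> 8 samples per order-2 cycle
```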

Applying an FFT on this signal, then, transforms the angular domain into the "order" domain. The resulting spectral lines represent constant orders instead of constant frequencies.

The use of FFTs to transform the angular domain to the order domain leads to some important relationships. The highest order Om that can be analyzed, for example, is directly related to the angular sampling frequency: Om = Φs/2, where Φs = angular sampling frequency (samples/rev). Order resolution is given by ΔO = Φs/N, where N = number of samples used to compute the FFT.
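Plugging illustrative numbers into these relationships (the function name is hypothetical):

```python
def order_limits(samples_per_rev, n):
    """Order-domain limits for an FFT of n angle-domain samples.

    samples_per_rev is the angular sampling frequency (Phi_s, samples/rev).
    """
    max_order = samples_per_rev / 2         # Om = Phi_s / 2
    order_resolution = samples_per_rev / n  # delta-O = Phi_s / N
    revolutions = n / samples_per_rev       # shaft turns covered by the record
    return max_order, order_resolution, revolutions

print(order_limits(64, 1024))  # (32.0, 0.0625, 16.0)
```

The order resolution is simply the reciprocal of the number of revolutions in the record. At 64 samples/rev, a shaft turning at 6,000 rpm (100 rev/s) corresponds to 6,400 samples/s, so the equivalent time-domain sampling rate rises and falls with speed.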

A good example that illustrates the use of order analysis is an engine run-up test. Consider a four-cylinder internal combustion engine. The major sources of noise and vibration are related to cylinder firing, which occurs twice per crankshaft revolution. Not surprisingly, vibration measurements will include a strong contribution from the second order.
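The run-up case can be simulated end to end: a constant-time acquisition during a speed sweep, digital resampling using tach pulses, and a DFT in the order domain. Everything here is an assumption for illustration: the linear 10-to-50 rev/s run-up, the 60-pulse tach wheel, the sampling rates, and the vibration itself, modeled as a pure second-order sine. A naive DFT stands in for an FFT.

```python
import bisect, cmath, math

FS = 5000.0     # time-domain sampling rate, samples/s (assumed)
PPR = 60        # tach pulses per revolution (assumed 60-tooth wheel)
SPR = 32        # angular sampling rate, samples/rev
REVS = 32       # revolutions analyzed
N = SPR * REVS  # samples in the order-domain FFT -> order resolution 1/REVS

def shaft_revs(t):
    """Simulated run-up: speed rises linearly from 10 to 50 rev/s over 2 s."""
    return 10 * t + 10 * t * t

# Constant-time acquisition of a vibration that is purely 2nd order (assumption).
t = [k / FS for k in range(int(2 * FS) + 1)]
x = [math.sin(2 * math.pi * 2 * shaft_revs(tk)) for tk in t]

# Tach pulse p occurs at p / PPR revolutions; invert shaft_revs to get its time.
pulses = [(-10 + math.sqrt(100 + 40 * p / PPR)) / 20 for p in range(PPR * REVS + 1)]

def interp(xs, ys, xq):
    """Linear interpolation of ys(xs) at xq (xs ascending)."""
    i = min(max(bisect.bisect_right(xs, xq) - 1, 0), len(xs) - 2)
    return ys[i] + (xq - xs[i]) * (ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i])

# Digital resampling: find the time of each target angle between tach pulses,
# then read the signal at that time.
resampled = []
for j in range(N):
    pos = j * PPR / SPR  # target angle, in pulse units
    p = int(pos)
    tq = pulses[p] + (pos - p) * (pulses[p + 1] - pulses[p])
    resampled.append(interp(t, x, tq))

def magnitudes(y):
    """Naive DFT magnitudes, bins 0..N/2 (an FFT would be used in practice)."""
    n = len(y)
    return [abs(sum(y[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n)))
            for m in range(n // 2 + 1)]

mags = magnitudes(resampled)
orders = [m * SPR / N for m in range(len(mags))]  # bin m corresponds to order m * SPR / N
peak_order = orders[max(range(len(mags)), key=mags.__getitem__)]
```

Although the vibration frequency sweeps from 20 Hz to over 70 Hz during the 32 revolutions analyzed, the order spectrum shows a single sharp line at order 2.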

Time and time again

Sometimes the "snapshots" you get from processing-intensive measurements just don't tell the whole story. The real information, the nature of the signal, is in the trending or temporal variations. For this, the solution is a data-processing function called joint time-frequency analysis (JTFA). Simply put, JTFA describes how a signal's frequency content changes over time.

Unlike classical processing methods that force you to analyze signals in either the time or frequency domain, JTFA lets you look at signals in the time and frequency domains simultaneously. There are a couple of ways it can be done. If the frequency of interest doesn't change rapidly, your best bet is to use short-time Fourier transforms (STFTs).
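A minimal STFT sketch: slide a Hann-windowed segment along the signal and compute a DFT of each segment. The window length, hop size, and test signal (a tone that jumps from bin 4 to bin 12 halfway through) are assumptions; the window length sets the tradeoff between time and frequency resolution.

```python
import cmath, math

def stft(x, win_len, hop):
    """Short-time Fourier transform magnitudes.

    Slides a Hann-windowed segment of win_len samples along x in steps of hop
    samples and returns one list of DFT magnitudes (bins 0..win_len//2) per segment.
    """
    window = [0.5 - 0.5 * math.cos(2 * math.pi * k / win_len) for k in range(win_len)]
    frames = []
    for start in range(0, len(x) - win_len + 1, hop):
        seg = [x[start + k] * window[k] for k in range(win_len)]
        frames.append([abs(sum(seg[k] * cmath.exp(-2j * math.pi * m * k / win_len)
                               for k in range(win_len)))
                       for m in range(win_len // 2 + 1)])
    return frames

# Hypothetical test signal: a tone in bin 4 that jumps to bin 12 halfway through.
WIN = 64
signal = ([math.sin(2 * math.pi * 4 * k / WIN) for k in range(512)] +
          [math.sin(2 * math.pi * 12 * k / WIN) for k in range(512, 1024)])
frames = stft(signal, WIN, hop=32)

def peak_bin(frame):
    return max(range(len(frame)), key=frame.__getitem__)

track = [peak_bin(f) for f in frames]  # dominant bin in each time slice
```

Longer windows give finer frequency bins but blur the moment the frequency changes; shorter windows do the opposite.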

If you don't know a lot about your signal, you're probably better off using a Gabor spectrogram. Start with a low-order analysis, then increase the order until the joint time-frequency resolution is adequate. In most cases, the order will be between two and four.

A good example of where you might use a Gabor JTFA is to analyze a gear drive you suspect of causing vibrations. If you see repetitive patterns in the spectrogram at some multiple of the meshing frequency, they may be caused by the modulation of eccentricities in both pinions. In addition, a "hot spot" at the meshing frequency may be telling you that the tooth profiles in both pinions are damaged and worn.

Thierry Debelle is a Senior Software Engineer with National Instruments, Austin, Tex.