Smart Manufacturing Looks to Advances in 3D Machine Vision

So, you’ve just installed a robot in your plant to automate your entire process. As far as bringing your process up to date with modern technology goes, that should be it. But David Dechow, a 3D machine vision and intelligent robotics expert for FANUC Robotics, would disagree.

3D machine vision is the ability of an automated system to detect objects, analyze distance, and adjust its processes on the fly. “3D machine vision is a revolution in imaging sensors,” said Dechow. “If you are not prepared to move this technology in 5 to 10 years or less, you will be behind the curve.”

At the Pacific Design & Manufacturing Show, Dechow gave a presentation on “Advances in 3D Machine Vision for Robotic Manipulation” as part of the Smart Manufacturing Innovation Summit. Machine vision in this context is a form of factory floor automation, and it is becoming more mainstream in flexible automation and smart manufacturing. The basics of 3D machine vision involve more than just making the image: the system must also recognize what is being observed and create a new set of work parameters based on it.

To make the image, one can use the single point method (which provides the location of an object in 3D space) or the more familiar point cloud method (in which every point in the image represents an XYZ coordinate). The points would be the ridges of the structured body rather than the pixels that comprise the image. In a point cloud, you have to combine the data to get a result.
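As a rough illustration of what combining point cloud data can look like, the sketch below reduces a small cloud to a single location (its centroid) and an overall size. The sample coordinates, the use of NumPy, and the `summarize_cloud` helper are all assumptions made for this example; they are not taken from Dechow's presentation or from any particular vision system.

```python
import numpy as np

# Hypothetical point cloud: each row is one (X, Y, Z) measurement in metres.
cloud = np.array([
    [0.10, 0.20, 1.00],
    [0.12, 0.20, 1.02],
    [0.10, 0.22, 0.98],
    [0.12, 0.22, 1.00],
])


def summarize_cloud(points):
    """Reduce an N x 3 point cloud to a centroid and an axis-aligned extent."""
    points = np.asarray(points, dtype=float)
    centroid = points.mean(axis=0)                     # one representative XYZ location
    extent = points.max(axis=0) - points.min(axis=0)   # bounding-box size along X, Y, Z
    return centroid, extent


centroid, extent = summarize_cloud(cloud)
print("centroid (m):", centroid)
print("extent (m):", extent)
```

In this sketch the centroid plays the role of a single-point result, while the extent gives a crude size estimate. A production system would typically first separate the object from its background and filter out noisy points.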

The basics of machine vision rely on stereo or multiple-camera techniques to capture images. Just like your eyes, multiple cameras allow you to triangulate points in space. Stereo imaging runs into correspondence problems (matching the same point in each camera's view) on non-textured surfaces such as a tablecloth, and these are solved by using structured lighting. In the end, you get a 3D snapshot that can be analyzed by the image-capturing software.
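The triangulation idea can be sketched for the simplest textbook case: two identical, parallel cameras whose images have been rectified, so that a point appears on the same row in both views. Under that assumption, depth is the focal length times the camera spacing divided by the disparity (the horizontal shift of the point between the two images). The function names and all numeric values below are hypothetical and chosen only for illustration.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Depth Z = f * B / d for a rectified, parallel stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_m / disparity_px


def triangulate(u_left, v, disparity_px, focal_length_px, baseline_m, cx, cy):
    """Recover (X, Y, Z) in the left camera frame from a matched pixel pair.

    u_left, v : pixel coordinates of the point in the left image
    cx, cy    : principal point (image center) in pixels
    """
    z = depth_from_disparity(focal_length_px, baseline_m, disparity_px)
    x = (u_left - cx) * z / focal_length_px
    y = (v - cy) * z / focal_length_px
    return x, y, z


# Hypothetical setup: 800 px focal length, cameras 10 cm apart,
# and a point that shifts 40 px between the left and right images.
x, y, z = triangulate(u_left=720, v=360, disparity_px=40,
                      focal_length_px=800, baseline_m=0.1, cx=640, cy=360)
print(f"X={x:.3f} m, Y={y:.3f} m, Z={z:.3f} m")
```

The formula also shows why the correspondence step matters: the disparity can only be measured once the same physical point has been found in both images, which is what structured lighting helps with on featureless surfaces.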

The advances in this technology have been spearheaded by consumer technology. According to Dechow, your next smartphone will have a 3D imaging camera. These cameras are being used today to identify the size and shape of packages. Future innovations will allow 3D scene analysis, 2D processing on a 3D image, and machine learning applications where a robot will be able to objectively learn in real time.

One example would be a robot optimizing its grip. Depending on the object it intends to grab, it adjusts its grip strength based on the image it captures, analyzing whether the object is sturdy or fragile.

The smart manufacturing sector today is underutilizing 3D imaging. Dechow predicted that the technology will be used for inspection purposes (e.g., identifying defects in products) and for robotic guidance, so that robots can identify their human counterparts. Once robots can identify the people around them, they can collaborate with them on common tasks.

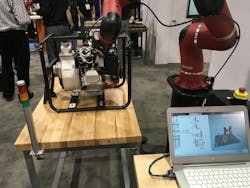

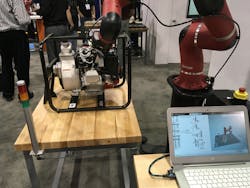

While we may still not be taking full advantage of machine vision, you can already see it at work on the Pacific Design show floor. Rethink Robotics’ Sawyer and its latest Intera software are demonstrating how camera technology and robots can work together. The accompanying video highlights how, using a Cognex machine vision system, Sawyer is able to visually inspect parts and labels on the engine to ensure they are properly located and to perform proper installation checks.

Sawyer also uses its force-sensing technology to ensure the spark plug is installed correctly, pulling on the spark plug cable with the right amount of force. If any of the labels, bolts, or spark plug cables are missing or improperly installed, it reports a failure to signal that there is a problem. This combination of machine vision, robotics, and force-sensing capabilities provides a completely automated process.