Like information invisibly encoded in strands of DNA, engineered works — bridges, dams, aircraft, disk drives, motors, and so on — are characteristically imprinted with math. With few exceptions, math is the foundation as well as the structure for all technological achievement; and on the wings of computers, it may carry us farther than anyone ever imagined. Take a journey along the evolutionary path of mathematical analysis, and you see not only the past but the future.

From maps to Mars

Mathematical modeling, in one form or another, is behind virtually all technological progress since the beginning of scientific discovery.

Follow the trail of Euclidean geometry, for example. Starting in ancient times and extending through the Renaissance, it has led to such technological advancements as surveying, mapping, navigation, and astronomy. Granted, the tools and methods were primitive by today’s standards, but they allowed man for the first time to explore the extent of his own universe.

The next big leap was the development of tools to model time-variant events. In the 17th century, mathematicians worked out the theory to describe real-world (dynamic) phenomena — motion. The breakthrough came from Newton and Leibnitz, who independently developed differential calculus.

One feat in particular demonstrated the power of the new math. With a relatively simple model, Newton not only reproduced known planetary orbits, he predicted future positions. The accuracy of his celestial mechanics is impressive by any measure and it had broad repercussions, challenging man’s view of the universe and his role in it.

Rather than attempting to describe a planetary orbit as a static curve, Newton’s revolutionary invention of a dynamic model for motion expressed how changes in velocity are related to position. The model, as Leibnitz said it must be, was a set of differential equations expressing the relationship between position and its derivatives.

A stone’s throw

Dynamic equations, while facilitating analysis of complicated systems, presented their own set of challenges. Suppose you want to model the trajectory of a rock thrown through the air. Using Newton’s Law of Motion, and assuming gravity is constant near the earth’s surface, the differential equation describing the stone’s height u as a function of time t is:

$$\frac{d^2 u}{dt^2} = -g$$

where g is the acceleration due to gravity. Integrating twice gives the solution

$$u(t) = -\tfrac{1}{2} g t^2 + a t + b$$

where the constants a and b must be determined from a known position and velocity corresponding to a given time. In the 18th century, this was about as far as one could take it.
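As a minimal sketch of how the two constants are fixed, assume the constant-gravity model u'' = -g with general solution u(t) = -g t²/2 + a t + b. The function names and the sample values below are illustrative assumptions, not part of the original analysis.

```python
G = 9.81  # gravitational acceleration in m/s^2 (assumed value)

def fit_constants(t0, u0, v0, g=G):
    """Return (a, b) so that u(t) = -g*t**2/2 + a*t + b has
    height u0 and vertical velocity v0 at time t0."""
    a = v0 + g * t0                       # from u'(t0) = -g*t0 + a = v0
    b = u0 + 0.5 * g * t0**2 - a * t0     # from u(t0) = u0
    return a, b

def height(t, a, b, g=G):
    """Height of the stone at time t for the fitted constants."""
    return -0.5 * g * t**2 + a * t + b

# Hypothetical throw: released at t = 0 from 1.5 m, moving upward at 10 m/s.
a, b = fit_constants(t0=0.0, u0=1.5, v0=10.0)
t_peak = a / G                            # time at which u'(t) = 0
print(a, b, height(t_peak, a, b))
```

With the constants in hand, the whole trajectory follows from one formula, which is what makes this problem so much easier than the ones that come next.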

Few dynamic problems are as simple as projectile motion, however. Consider the motion of planets in our solar system. The main influencing force, as Newton pointed out, is interplanetary gravitational pull. For just two planets, the force of gravity is proportional to the product of the planets’ masses and inversely proportional to the square of the separation distance. When you consider the entire solar system, the force on each planet is the sum of the forces imposed by every other planet. Using Newton’s Law of Motion, the positions of Earth, Mercury, Jupiter, and every other planet in our solar system satisfy:

$$m_i \frac{d^2 u_i}{dt^2} = C \sum_{j \neq i} \frac{m_i m_j \,(u_j - u_i)}{|u_j - u_i|^3}$$

where $u_i$ indicates the position of planet i, t = time, $m_i$ = mass of planet i, and C = Newton’s constant of gravity. Note that $u_i$ is a vector with three components, the planet’s x, y, z coordinates.

This equation, although it involves a more complicated force, is somewhat similar to that of the tossed stone. But there are differences that make the solution orders of magnitude more difficult. For instance, you can’t solve for the position of a single planet by itself; you need the position of all other planets to determine the force acting on the one of interest.
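The coupling described above can be sketched in a few lines. This is an illustrative example only: it assumes NumPy, the function name `accelerations` is hypothetical, and the two-body test values are made up for checking. Each body's acceleration is C times the sum, over every other body, of that body's mass times the separation vector divided by the cube of the distance.

```python
import numpy as np

def accelerations(pos, mass, C=6.674e-11):
    """Gravitational acceleration of every body due to all the others.

    pos  : (n, 3) array of positions
    mass : (n,) array of masses
    C    : constant of gravity (SI value by default)
    """
    diff = pos[None, :, :] - pos[:, None, :]   # diff[i, j] = u_j - u_i
    dist = np.linalg.norm(diff, axis=2)        # dist[i, j] = |u_j - u_i|
    np.fill_diagonal(dist, np.inf)             # a body exerts no force on itself
    weights = mass[None, :, None] / dist[:, :, None] ** 3
    return C * np.sum(weights * diff, axis=1)

# Two hypothetical bodies one unit apart, with C set to 1 for easy checking.
pos = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
mass = np.array([2.0, 3.0])
acc = accelerations(pos, mass, C=1.0)
print(acc)
```

Note that no row of the result can be computed without the positions of all the other bodies, which is exactly the difficulty the text points out.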

More perplexing is the fact that there doesn’t seem to be an analytical solution. Newton showed that if only two planets were in space, they would travel in elliptical, parabolic, or hyperbolic orbits around the center of gravity. Since then, despite enormous research efforts, most mathematical issues related to systems of three or more planets remain unresolved; to this day, no one has found an analytical solution to the planetary equations of motion.

Partial victory

We’ve defined just two problems so far, both on the basis of a single independent variable. Many physical processes, however, involve multiple independent variables. Analyzing phenomena of this sort requires a more advanced form of math known as partial differential equations.

One of the best-known partial differential equations of all time is the Poisson equation. Developed in the early 19th century, it applies to many things observed in physics including the gravitational field of a planetary system. In the previous example we assumed the masses of the planets to be located at infinitely small points. A more realistic analysis accounts for distributed mass, which is exactly what Poisson’s equation does:
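In one common notation, with symbols chosen here for illustration, Poisson's equation for a gravitational field reads

$$\nabla^2 \phi = 4 \pi C \rho$$

where $\phi$ is the gravitational potential, $\rho$ is the mass density at each point in space, and C is the constant of gravity used earlier. The force per unit mass at any point is then $-\nabla \phi$.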

Poisson’s equation also applies to electromagnetics, geophysics, and chemical engineering. Other partial differential equations of note are the heat-transfer equation and the wave equation.
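In standard textbook form, again with symbols assumed here for illustration, the heat-transfer equation for a temperature field T and thermal diffusivity $\alpha$ is

$$\frac{\partial T}{\partial t} = \alpha \nabla^2 T$$

and the closely related wave equation for a disturbance u traveling at speed c is

$$\frac{\partial^2 u}{\partial t^2} = c^2 \nabla^2 u$$

Both have more than one independent variable (time and the space coordinates), which is what makes them partial rather than ordinary differential equations.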

These and other partial differential equations (including the Schrödinger equation in quantum mechanics, the Navier equation in structural mechanics, and the Navier-Stokes and Euler equations in fluid dynamics) are fundamental to all sciences and have greatly aided the development of modern-day technology.

Nature’s curve

Partial differential equations are typically easier to solve if all terms involving the function of interest and its derivatives can be reduced to a linear combination whose coefficients do not depend on that function. If that’s the case, the equation is said to be linear; otherwise it’s nonlinear.

The distinction between linear and nonlinear partial differential equations (PDEs) is crucial when it comes time to solve them. Many linear PDEs — including Poisson’s equation, the heat equation, the wave equation, and the equation describing the tossed stone — reduce to an exact form by proper manipulation. Some of the techniques include separation of variables, superposition, Fourier series, and Laplace transforms.

In contrast, nonlinear PDEs — such as the Navier-Stokes equation and those that express planetary motion — are seldom solvable in analytical form. Instead you must rely on numerical solutions and approximations. Many algorithms for this purpose exist today and are quite accurate even when compared to exact mathematical solutions.
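To illustrate how close a numerical approximation can come to an exact answer, the sketch below integrates a two-body orbit step by step and compares the result with the known exact solution. Everything here is an assumption made for the example: the units are scaled so that the product of the constant of gravity and the central mass is 1, the orbit is a circle of radius 1 (period 2π), and the stepping scheme is velocity Verlet, chosen for brevity rather than taken from the article.

```python
import math

def accel(x, y):
    """Inverse-square attraction toward the origin, with C * M scaled to 1."""
    r3 = (x * x + y * y) ** 1.5
    return -x / r3, -y / r3

def orbit(steps_per_period=1000, periods=1):
    """Advance a body through circular orbits using velocity Verlet steps."""
    dt = 2 * math.pi / steps_per_period
    x, y, vx, vy = 1.0, 0.0, 0.0, 1.0        # exact circular-orbit start
    ax, ay = accel(x, y)
    for _ in range(steps_per_period * periods):
        vx += 0.5 * dt * ax                   # half-step velocity update
        vy += 0.5 * dt * ay
        x += dt * vx                          # full-step position update
        y += dt * vy
        ax, ay = accel(x, y)                  # force at the new position
        vx += 0.5 * dt * ax                   # second half-step velocity update
        vy += 0.5 * dt * ay
    return x, y

x, y = orbit()
error = math.hypot(x - 1.0, y - 0.0)          # exact answer after one period: (1, 0)
print(f"position error after one orbit: {error:.2e}")
```

Halving the step size should cut the error by roughly a factor of four, the usual trade between accuracy and arithmetic.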

Three centuries ago Leibnitz envisioned as much, predicting the eventual development of a general method that could be used to solve any differential equation.

It was in pursuit of such dreams as this, and the more pressing needs of navigators and astronomers, that early mathematicians looked for easier calculation methods. They devoted centuries to creating tables, notably of logarithms and trigonometric functions, that allowed virtually anyone to quickly and accurately calculate answers to a variety of problems.

The tables, however, were only a stepping stone. Even in his time Leibnitz realized the benefits of automating math with machines. “Knowing the algorithm of this calculus,” he said, “all differential equations that can be solved by a common method, not only addition and subtraction but also multiplication and division, could be accomplished by a suitably arranged machine.”

Crunch time

Devices that “carry out the mechanics of calculations” emerged just as Leibnitz predicted, but it was the advent of digital computers that finally made computational solutions practical. In the decades since, researchers have developed many programs that approximate continuous functions using repetitive calculations. The techniques, by necessity, have relied on finesse rather than brute force.

To appreciate the challenge posed by even a simple differential equation, consider the analysis of a diode. Here, the quantities of interest are the electric potential of the valence band and bipolar (electron-hole) current in the p-n junction.

The first step is to model the current-voltage (I-V) relationship. Expressed in terms of partial differential equations, the I-V response is based on the conservation of charge and the relation between field strength and the concentration of electrons and holes. The full I-V characteristics are obtained by post-processing a family of solutions for a series of applied voltages.

Because there isn’t a nice, neat formula describing all points in the diode at all times, the problem has to be solved by breaking the region of interest into a large number of small cells. For a simple diode, the solution may involve tens of thousands of cells strategically concentrated in regions where the electric field changes rapidly.

Focusing on small elements simplifies calculations, but it also means that all values depend on those of the surrounding cells. What’s more, if the field distribution changes appreciably with applied voltage, it becomes necessary to regenerate all the elements for each voltage step.
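The idea of cells that depend on their neighbors can be sketched with a much simpler stand-in than the diode: a one-dimensional Poisson problem, -u'' = f on the interval from 0 to 1, with the value of u fixed at both ends. This is an assumed toy problem, not the semiconductor model itself, and the function name `solve_cells` is hypothetical. Each unknown is tied to the cell on its left and right, so all the values must be found together by solving one linear system.

```python
import numpy as np

def solve_cells(n=50, source=1.0, left=0.0, right=0.0):
    """Solve -u'' = source on (0, 1) with u(0) = left and u(1) = right,
    using n interior cells and central differences."""
    h = 1.0 / (n + 1)                         # spacing between cell centers
    # Row i couples u[i] to its neighbors u[i-1] and u[i+1].
    A = (2 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    rhs = np.full(n, source, dtype=float)
    rhs[0] += left / h**2                     # known boundary values move
    rhs[-1] += right / h**2                   # to the right-hand side
    x = np.linspace(h, 1 - h, n)
    return x, np.linalg.solve(A, rhs)

x, u = solve_cells()
exact = x * (1 - x) / 2                       # exact solution for source = 1
print(np.max(np.abs(u - exact)))
```

A dense matrix keeps the sketch short. With tens of thousands of cells in two or three dimensions, practical codes store only the nonzero entries and use sparse solvers.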

Generating and solving thousands upon thousands of interrelated equations like this can take billions of arithmetical operations. Just two decades ago this would have been too much for almost any computational machine, but with computer power doubling every 18 months — by some estimates our problem-solving capacity has improved by a factor of a million over the last 30 years — we now have the ability to solve these types of problems on our “suitably arranged machines,” otherwise known as desktop PCs.
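The two figures quoted above are consistent with each other, as a quick calculation shows. The variable names are illustrative.

```python
years = 30
doubling_period_years = 1.5                   # "every 18 months"

doublings = years / doubling_period_years     # number of doublings in 30 years
growth = 2 ** doublings                       # overall improvement factor

print(f"{doublings:.0f} doublings, factor of {growth:,.0f}")
# prints "20 doublings, factor of 1,048,576"
```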

Methods keep pace

Computational power isn’t the only thing improving. Recent developments in numerical methods have also contributed to technological progress.

One of the more notable landmarks in numerical methods was the invention of the fast Fourier transform (FFT) in the mid-1960s. FFTs have slashed, by orders of magnitude, the time needed to decompose an arbitrary waveform into its constituent frequencies. They have changed our view of digital signal processing, and they’re at the center of innumerable algorithms for robotics, imaging, vibration control, and error correction.
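A short sketch shows what decomposing a waveform looks like in practice. It assumes NumPy's FFT routines, and the test signal (a 50 Hz tone plus a weaker 120 Hz tone, sampled at 1 kHz for one second) is made up for the example.

```python
import numpy as np

fs = 1000                                     # sampling rate in Hz (assumed)
t = np.arange(fs) / fs                        # one second of sample times

# Hypothetical waveform: two sine tones of different strength.
signal = 1.0 * np.sin(2 * np.pi * 50 * t) + 0.5 * np.sin(2 * np.pi * 120 * t)

spectrum = np.fft.rfft(signal)                # FFT of a real-valued signal
freqs = np.fft.rfftfreq(signal.size, d=1 / fs)
amplitude = 2 * np.abs(spectrum) / signal.size

peaks = freqs[amplitude > 0.1]                # frequencies that stand out
print(peaks, amplitude[amplitude > 0.1])
```

For N samples a direct evaluation of the transform takes on the order of N² operations, while the FFT takes on the order of N log N, which is where the orders-of-magnitude savings come from.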

Although there’s no inherent link between the frequency domain and differential calculus, the two seem to complement each other quite well. Indeed, with FFTs and differential math we can now quantitatively describe phenomena as varied as electron clouds, clusters of galaxies, and membrane deflection. Furthermore, FFTs are at the heart of fast Poisson solvers, which make it possible, for example, to run simulations that account for millions of galaxies. With such power, scientists can now examine various scenarios for the evolution of the universe, running their simulations in fast-forward and reverse.
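As a minimal sketch of a fast Poisson solver, assume a square, periodic two-dimensional grid. In the frequency domain the Laplacian becomes multiplication by minus the squared wavenumber, so solving ∇²u = f reduces to one forward FFT, a division, and one inverse FFT. The function name and the test field are illustrative assumptions.

```python
import numpy as np

def poisson_periodic(f, length=2 * np.pi):
    """Solve laplacian(u) = f on a square periodic grid of side `length`.
    f must be an n-by-n array with zero mean; the result has zero mean."""
    n = f.shape[0]
    k = 2 * np.pi * np.fft.fftfreq(n, d=length / n)   # angular wavenumbers
    kx, ky = np.meshgrid(k, k, indexing="ij")
    k2 = kx**2 + ky**2
    k2[0, 0] = 1.0                            # placeholder to avoid dividing by zero
    u_hat = -np.fft.fft2(f) / k2
    u_hat[0, 0] = 0.0                         # fix the undetermined mean to zero
    return np.fft.ifft2(u_hat).real

# Check against a field whose Laplacian is known in closed form.
n = 32
grid = np.arange(n) * 2 * np.pi / n
X, Y = np.meshgrid(grid, grid, indexing="ij")
u_exact = np.sin(X) * np.cos(2 * Y)           # laplacian = -(1 + 4) * u_exact
u = poisson_periodic(-5.0 * u_exact)
print(np.max(np.abs(u - u_exact)))
```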

Trying triangles

In more recent years, another numerical method has been enlisted in solving differential equations: the finite element method. It first appeared about 50 years ago as a tool for structural analysis. Since then it has evolved into a technology with general application.

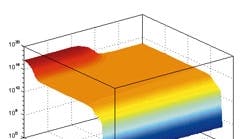

To see what the finite element method brings to PDEs, consider the deflection of a thin membrane subject to normal loads. The first step in the solution is to divide the region into a set of triangles. Triangles are useful for approximating irregular domains and they address the need of numeric methods to discretize the continuous world.

With finite elements, the solution simplifies to determining the position of the corners of each triangle. Think of the loaded membrane as a set of plane triangular facets joined along their edges, forming a dome. The general mathematical approach is to compute the corner positions, or heights, that make the dome closest to the exact deflection shape. This is obviously less taxing than trying to come up with an analytical solution.
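The procedure can be sketched for the simplest case. The example assumes a square membrane of unit side, fixed along its edges, under uniform tension (scaled to 1) and a uniform normal load, so the deflection w satisfies -∇²w = load. The mesh layout, function name, and dense matrix storage are choices made for brevity, not a description of any particular software.

```python
import numpy as np

def membrane_deflection(n=16, load=1.0):
    """Corner heights of a triangulated unit-square membrane, edges fixed."""
    xs = np.linspace(0.0, 1.0, n + 1)
    X, Y = np.meshgrid(xs, xs, indexing="ij")
    pts = np.column_stack([X.ravel(), Y.ravel()])
    idx = np.arange((n + 1) ** 2).reshape(n + 1, n + 1)

    # Split every grid square into two triangles.
    tris = []
    for i in range(n):
        for j in range(n):
            a, b = idx[i, j], idx[i + 1, j]
            c, d = idx[i, j + 1], idx[i + 1, j + 1]
            tris += [(a, b, d), (a, d, c)]

    N = len(pts)
    K = np.zeros((N, N))                      # global stiffness matrix
    F = np.zeros(N)                           # global load vector
    for tri in tris:
        p = pts[list(tri)]
        # Edge opposite each corner; gradients of the linear shape
        # functions are these edges rotated 90 degrees over twice the area.
        e = np.array([p[2] - p[1], p[0] - p[2], p[1] - p[0]])
        area = 0.5 * abs(e[0, 0] * e[1, 1] - e[0, 1] * e[1, 0])
        Ke = (e @ e.T) / (4.0 * area)         # element stiffness
        for r in range(3):
            F[tri[r]] += load * area / 3.0    # share the load among corners
            for s in range(3):
                K[tri[r], tri[s]] += Ke[r, s]

    on_edge = np.isclose(pts, 0.0).any(axis=1) | np.isclose(pts, 1.0).any(axis=1)
    free = np.flatnonzero(~on_edge)           # corners that are allowed to move
    w = np.zeros(N)
    w[free] = np.linalg.solve(K[np.ix_(free, free)], F[free])
    return w.reshape(n + 1, n + 1)

w = membrane_deflection()
print(w[8, 8])                                # deflection at the center
```

For this load case the exact center deflection is approximately 0.0737, so the value printed by the sketch can be checked against it; refining the mesh brings the two closer.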

Moreover, the procedure is quite systematic — generate elements, compute solution, and display results — so it lends itself to computer implementation. Graphically oriented operating environments and off-the-shelf application software only make the process easier.

Moving to multiphysics

As interesting as the previous examples may seem, the only physical phenomenon they account for is motion. Nature, however, is more complicated than that. As soon as any system starts to move, it begins to heat. If you want to consider dynamics, therefore, you need to think about heat transfer. And since heat transfer usually influences other physical properties (electrical conductivity, chemical reaction rate, magnetic reluctance), you’ve got a lot of work ahead of you if it’s accuracy you’re after.

A computational approach that simultaneously accounts for all the phenomena related to an event is called multiphysics. This, too, is possible with today’s computer hardware and software.

To appreciate the need for a multiphysics approach to numerical analysis, imagine trying to model the stresses in a cracked heat-exchange tube such as you might find in a pulp mill. The tubes consist of two concentric layers of different stainless steels. Assume the culprit is a flaw in the joint between the inner and outer tubes.

A one-dimensional model will show that the flaw impedes heat flow, setting up a temperature difference that creates thermal stresses. With the addition of a dynamic modeler, one can also show that the stresses propagate the crack along the interface, causing the temperature difference to increase even more. The interaction of heat transfer and mechanics, and its cumulative effect, is by no means limited to heat exchangers, suggesting that multiphysics may one day be the standard computational approach.
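The first half of that argument, the flaw impeding heat flow and opening up a temperature difference, can be sketched with a one-dimensional steady-state model. The sketch treats the tube wall as two flat layers in series with an extra thermal resistance at the flawed joint. The function name and every number are hypothetical, chosen only to show the trend, and the stress and crack-growth steps are not modeled.

```python
def interface_jump(t_hot, t_cold, layers, contact_resistance):
    """Steady one-dimensional heat flow through layers in series.

    layers             : list of (thickness in m, conductivity in W/(m*K))
    contact_resistance : extra resistance at the joint in m^2*K/W
    Returns (heat flux in W/m^2, temperature jump across the joint in K).
    """
    layer_resistance = sum(thickness / k for thickness, k in layers)
    flux = (t_hot - t_cold) / (layer_resistance + contact_resistance)
    return flux, flux * contact_resistance

# Hypothetical wall: two 2 mm steel layers with different conductivities.
wall = [(0.002, 15.0), (0.002, 20.0)]

sound = interface_jump(200.0, 100.0, wall, contact_resistance=0.0)
flawed = interface_jump(200.0, 100.0, wall, contact_resistance=2.0e-4)
print("sound joint :", sound)
print("flawed joint:", flawed)
```

A larger flaw means a larger contact resistance, less heat flow, and a larger temperature jump at the joint, which is the feedback loop the text describes once the mechanics are coupled in.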

Svante Littmarck is President of Comsol Inc., Burlington, Mass., and one of the founders of parent company Comsol AB.

Special thanks to: Dr. Lars Langemyr of Comsol AB, Prof. Jesper Oppelstrup of The Royal Institute of Technology in Stockholm, Sweden, and Paul Schreier, consultant.