The failure of a cement foam seal on the well set off a sequence of events resulting in oil and gas coming up the well casing and exploding into flames. Halliburton had recommended to the British oil company BP that the well include 21 metal centralizing collars to stabilize the cementing. But BP used only six. The government says Halliburton ordered its employees to destroy computer simulations showing little difference between using six and 21 collars.

Every engineer in the country should be upset by this ruling. Without debating the results of the analysis, the decision has clear implications for every designer doing stress analysis.

Taking a brief detour into engineering history, the reason for analysis is to avoid “build-and-bust” testing. Engineers want to model design performance without having to build a physical prototype. At the risk of sounding obvious, the reason for constructing a math model is to see whether the design breaks under the design load conditions. If failure conditions come too close to the expected use mode, a responsible engineer will rework the design to make failure less likely.
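To make the “too close to failure” judgment concrete, here is a minimal sketch of a factor-of-safety check. The function names and the 1.5 minimum factor are illustrative assumptions, not values from any particular code or standard; real criteria depend on the industry and the failure mode.

```python
def factor_of_safety(allowable_stress_mpa: float, predicted_stress_mpa: float) -> float:
    """Ratio of the stress the material can take to the stress the model predicts."""
    if predicted_stress_mpa <= 0:
        raise ValueError("predicted stress must be positive")
    return allowable_stress_mpa / predicted_stress_mpa


def needs_rework(allowable_stress_mpa: float,
                 predicted_stress_mpa: float,
                 min_factor: float = 1.5) -> bool:
    """True when the margin falls below the (assumed) minimum factor of safety."""
    return factor_of_safety(allowable_stress_mpa, predicted_stress_mpa) < min_factor


# Hypothetical numbers: a 250 MPa allowable against two predicted stress levels.
print(needs_rework(250.0, 200.0))  # margin of 1.25 is below 1.5, so rework
print(needs_rework(250.0, 100.0))  # margin of 2.5 is comfortable
```

Note that a run flagged for rework here is not a failed product. It is the analysis doing its job.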

Similarly, engineers construct math models to ensure the design does not fail. Bridges, planes, nuclear plants, and most forms of transportation have zero as the allowable failure rate. Models of these entities are frequently tested to beyond failure to ensure “normal” use will never cause a catastrophe. Confidence in the design only comes from simulating all possible failures. Engineers should not be penalized for exploring how something can break.

That said, what is your normal course of action if your analysis yields unfavorable results? Typically, you would “fix” the model to make sure the stresses meet your internal criteria. You may even perform some physical testing.

But it is infeasible to store every model and analysis result. A typical optimization run might involve running thousands of models for hundreds of parts. This process is disk intensive, so you would typically delete the bulk of the models without even a glance at the results.

Now suppose you were held responsible for noticing every single “failed” model. Is it even possible to examine all the models? And how do you define failure? Do you want an attorney and a senior analyst looking at each model to determine if it is worthy of deletion?

That is precisely the point that is troubling about the Halliburton decision. Also consider that a talented engineer will always perform a literal back-of-the-envelope calculation to verify the stresses a computer model predicts. But there will likely be no record of this quick check, nor an explicit link from this computation to any specific project. Can you imagine being required to log and record each computation and assign it to a specific project?
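For readers who have not done one, a back-of-the-envelope check can be as short as the sketch below. It assumes a rectangular cantilever beam with a point load at the tip, for which the peak bending stress at the root is 6FL/(bh²). The dimensions, the load, the 98 MPa “FEA” value, and the 10% tolerance are all made-up numbers for illustration.

```python
def cantilever_root_stress_pa(force_n: float, length_m: float,
                              width_m: float, height_m: float) -> float:
    """Peak bending stress at the fixed end of a rectangular cantilever.

    sigma = M * c / I with M = F * L, c = h / 2, I = b * h**3 / 12,
    which simplifies to 6 * F * L / (b * h**2).
    """
    return 6.0 * force_n * length_m / (width_m * height_m ** 2)


def agrees_with_model(hand_calc_pa: float, model_pa: float,
                      tolerance: float = 0.10) -> bool:
    """True when the model result is within the assumed tolerance of the hand calc."""
    return abs(model_pa - hand_calc_pa) / hand_calc_pa <= tolerance


# Hypothetical case: 1,000 N tip load on a 0.5 m beam, 20 mm wide by 40 mm deep.
hand = cantilever_root_stress_pa(1000.0, 0.5, 0.02, 0.04)
fea = 98.0e6  # assumed value read off a computer model, in Pa

print(f"hand calc: {hand / 1e6:.2f} MPa")
print("model agrees" if agrees_with_model(hand, fea) else "model needs a second look")
```

A check like this takes a minute and usually lives on scrap paper, which is exactly why it rarely ends up in any project file.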

Could an engineer ever refuse to run a structural analysis? In light of the Halliburton decision, perhaps so: If the back-of-the-envelope model fails, the rational engineer would refuse to do the analysis. Knowing that the outcome will be bad, and that a bad outcome may result in a legal entanglement, would any reasonable person do something that would get them sued?

The Halliburton fine will have a chilling effect on the engineering profession. It opens up the possibility that experiments to test limits or even to check normal behavior could be viewed as “evidence” in a lawsuit. And your company could be fined if you somehow delete this data, even accidentally.

People unfamiliar with finite-element analysis have the mistaken impression that it produces “an answer.” Depending on a host of factors that only a well-seasoned structural analyst can understand, FEA can reveal a vast range of “good” or “bad” answers to any problem. It’s often hard enough explaining the answers to a boss; the chances of explaining them to an attorney could well be vanishingly small.

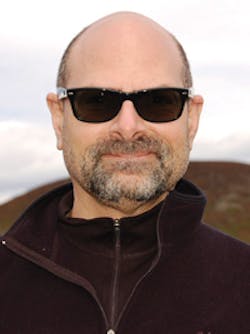

James I. Finkel

Engineering and Operations Manager
